Small Language Models (SLMs) are reshaping how businesses deploy AI by delivering faster, cheaper, and more controllable intelligence. Unlike large, resource-heavy models, SLMs focus on efficiency, making them practical for real-world applications where speed, cost, and privacy matter most. From on-device assistants in smartphones that operate without internet connectivity to real-time customer support chatbots that reduce operational costs, SLMs are enabling organizations to scale AI in ways that were previously impractical.

As companies prioritize edge computing, data privacy, and predictable infrastructure costs, SLMs have become a strategic alternative to traditional large language models. Their ability to run locally, adapt quickly to domain-specific tasks, and deliver strong performance with fewer resources positions them at the center of modern AI adoption. Let’s explore the latest statistics and trends driving SLM adoption.

Editor’s Choice

- SLMs typically range from 1 million to 10 billion parameters, making them significantly smaller than traditional LLMs.

- Most production-ready SLMs in 2026 fall between 1B and 7B parameters, optimized for efficiency and speed.

- SLM deployment costs are 5x to 20x lower than LLMs in enterprise environments.

- Cloud inference for SLMs costs roughly $0.10–$0.50 per 1M tokens, compared to $2–$30 for LLMs.

- SLMs can deliver 80%–90% of LLM performance on domain-specific tasks after fine-tuning.

- Many SLMs run on a single GPU or even CPUs, enabling edge and on-device deployment.

- Training large models can exceed $100 million, pushing organizations toward smaller, cost-efficient alternatives.

Recent Developments

- In 2026, most AI vendors release compact model variants (under 10B parameters) alongside their flagship models.

- Microsoft’s Phi-3-mini (≈3.8B parameters) demonstrates competitive benchmark performance with a smaller footprint.

- Google’s Gemma 2 (≈9B parameters) shows strong quality-to-size efficiency ratios for enterprise deployment.

- Meta’s Llama 3.2 includes 1B–3B parameter variants designed for mobile and edge use.

- New SLM architectures prioritize latency reduction and deterministic outputs for production systems.

- Research models like Qwen-2.5-3B achieve ~0.79 accuracy in classification benchmarks with sub-second latency.

- SLM-based evaluation systems reduce inference costs by over 80x and latency by 20x compared to LLM-based methods.

- Edge AI adoption has accelerated, with SLMs enabling offline AI processing on smartphones and embedded devices.

- Enterprises increasingly adopt hybrid architectures where SLMs handle routine tasks while LLMs manage complex queries.
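The hybrid pattern in the last point can be sketched as a simple router. This is a minimal illustration, not a production design: `call_slm` and `call_llm` are hypothetical stand-ins for real model clients, and the word-count and keyword heuristic is an assumption made for the example. Real routers often rely on a trained classifier or on model confidence scores instead.

```python
from typing import Callable

# Hypothetical keywords that hint at multi-step reasoning (an assumption for illustration).
COMPLEX_HINTS = ("why", "compare", "prove", "step by step", "analyze")


def is_complex(query: str, max_words: int = 30) -> bool:
    """Crude heuristic: long queries or reasoning keywords count as complex."""
    text = query.lower()
    return len(text.split()) > max_words or any(hint in text for hint in COMPLEX_HINTS)


def route(query: str, call_slm: Callable[[str], str], call_llm: Callable[[str], str]) -> str:
    """Send routine queries to the small model and complex ones to the large model."""
    handler = call_llm if is_complex(query) else call_slm
    return handler(query)


# Stand-in model functions so the sketch runs without any model installed.
def fake_slm(query: str) -> str:
    return f"[SLM] {query}"


def fake_llm(query: str) -> str:
    return f"[LLM] {query}"


print(route("Reset my password", fake_slm, fake_llm))
print(route("Compare these two contracts and analyze the risks", fake_slm, fake_llm))
```

Keeping the routing decision in one small function makes it easy to swap the heuristic for a learned classifier later without touching the callers.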

Definition and Overview of Small Language Models

- SLMs are defined as lightweight AI models optimized for efficiency and low-resource environments.

- Typical parameter sizes range from 1M to 10B, depending on the use case.

- Many enterprise SLMs cluster between 1B and 13B parameters for optimal performance.

- SLMs are designed for task-specific optimization rather than broad general intelligence.

- They can run on consumer-grade hardware such as laptops and mobile devices.

- SLMs often use fine-tuning and parameter-efficient training techniques like LoRA to improve performance.

- They are widely used in chatbots, summarization tools, and embedded AI systems.

- SLMs support on-premise deployment, reducing reliance on cloud APIs.

- Their smaller footprint enables faster iteration and customization cycles for developers.

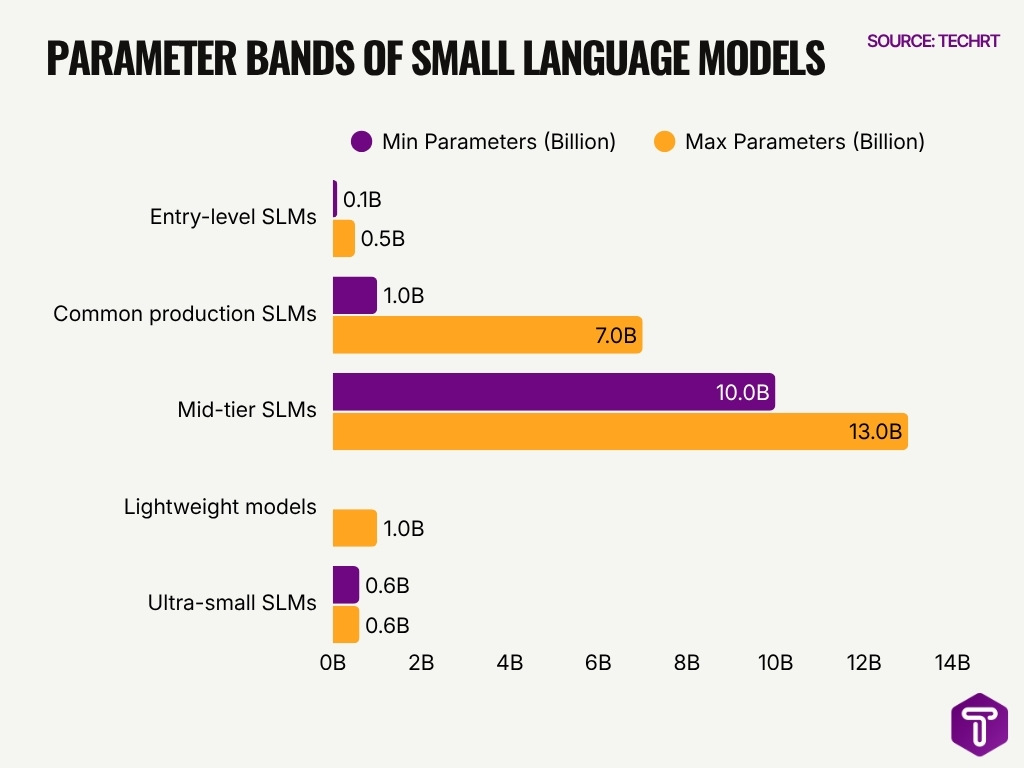

Parameter Size Ranges of Small Language Models

- Entry-level SLMs can be as small as 100M to 500M parameters, suitable for embedded AI.

- Common production SLMs range from 1B to 7B parameters, balancing cost and performance.

- Mid-tier SLMs extend up to 10B–13B parameters for enterprise use cases.

- Some lightweight models operate with under 1B parameters while maintaining usable accuracy.

- SLMs with fewer than 13B parameters can be trained on a single GPU.

- Models like Mistral 7B and Phi-3 demonstrate high efficiency at relatively small sizes.

- Ultra-small SLMs (≈0.6B parameters) still perform basic NLP tasks with acceptable accuracy.

- SLM parameter sizes are often 10x–100x smaller than LLMs, reducing memory and compute needs.

- Quantization (4-bit/8-bit) shrinks these models further, reducing memory and compute requirements.
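To make the link between parameter count, precision, and memory concrete, here is a rough back-of-the-envelope sketch. It counts weight storage only and assumes 1 GB = 1e9 bytes; real deployments also need memory for activations, the KV cache, and runtime overhead, so treat the results as a lower bound.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


# Model sizes taken from the ranges listed above; bit widths are common precision choices.
for size in (1, 3, 7, 13):
    row = ", ".join(f"{bits}-bit: {weight_memory_gb(size, bits):.1f} GB" for bits in (16, 8, 4))
    print(f"{size}B parameters -> {row}")
```

Under these assumptions, a 7B model needs about 14 GB for 16-bit weights and about 3.5 GB at 4-bit, which is why quantized models in this size class can fit on consumer hardware.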

Small Language Models vs Large Language Models

- SLMs typically range from 1B to 15B parameters, while LLMs range from 100B to more than 1T parameters.

- LLM training costs can exceed $100M, compared to significantly lower SLM training costs.

- SLMs offer faster inference speeds and lower latency, making them ideal for real-time applications.

- LLMs excel in complex reasoning and generalization, while SLMs perform well in focused tasks.

- SLM deployment costs are up to 20x lower than LLMs.

- SLMs can run locally on edge devices, while LLMs rely heavily on cloud infrastructure.

- SLMs provide greater data privacy, as data remains on-device.

- LLM inference costs range from $2 to $30 per 1M tokens, far higher than SLM costs.

- SLMs are easier to fine-tune, requiring fewer computational resources and datasets.

Training Data Volume and Token Counts in SLMs

- Most SLMs are trained on 10B to 500B tokens, significantly lower than trillion-token LLM datasets.

- Microsoft’s Phi-3-mini was trained on approximately 3.3 trillion tokens of heavily filtered web data and synthetic data, well above the typical range for models of its size.

- Typical enterprise SLM fine-tuning datasets range between 1M and 100M domain-specific tokens.

- Smaller models often rely on high-quality curated datasets, reducing the need for massive token counts.

- SLMs trained with synthetic data can reduce dataset size requirements by 30% to 70%.

- Open-source SLMs like Mistral 7B were reportedly trained on ~1 trillion tokens, while distilled versions use far fewer tokens.

- Fine-tuning SLMs typically requires less than 1% of the original training data, enabling faster iteration cycles.

- Domain-specific SLMs often use <50B tokens, focusing on accuracy over breadth.

- Token-efficient training methods improve performance by 15%–25% with fewer tokens.

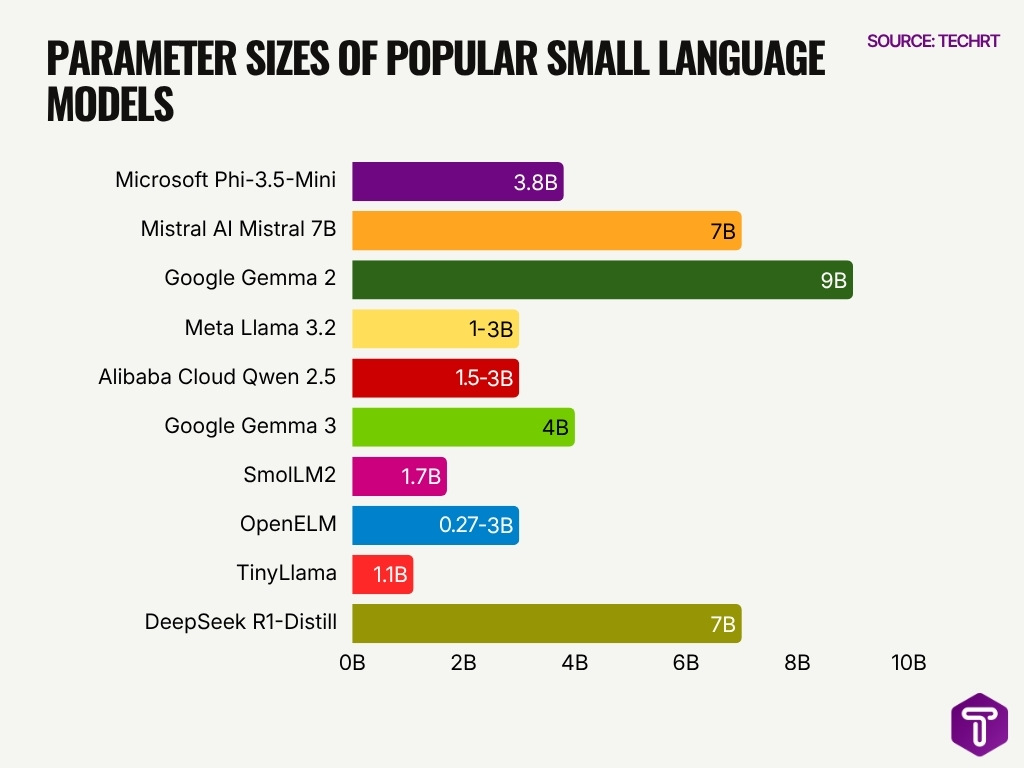

Popular Small Language Models and Parameter Counts

- Microsoft Phi-3.5-Mini utilizes 3.8B parameters for efficient reasoning tasks.

- Mistral 7B remains a widely adopted open-source model with 7B parameters.

- Google Gemma 2 offers a 9B parameter model designed for strong performance efficiency.

- Meta Llama 3.2 provides 1B and 3B parameter variants optimized for mobile and edge deployment.

- Qwen 2.5 delivers extensive multilingual support within compact 1.5B to 3B parameter sizes.

- Gemma 3 features a 4B parameter version specifically built to be both multilingual and multimodal.

- SmolLM2 is a compact model with 1.7B parameters designed for high efficiency on resource-constrained hardware.

- OpenELM maintains a flexible architecture ranging from 270 million to 3B parameters for low-latency needs.

- TinyLlama achieves notable computational efficiency with a total size of 1.1B parameters.

- DeepSeek-R1-Distill includes optimized 7B parameter models specialized for high-performance mathematics tasks.

Performance Benchmarks on General NLP Tasks

- SLMs achieve 80%–90% of LLM accuracy on tasks like summarization and classification.

- Mistral 7B outperforms several larger models on benchmarks despite its smaller size.

- Phi-3 Mini achieves ~69% accuracy on MMLU, comparable to larger models.

- Google Gemma 2 shows strong translation and summarization scores.

- SLMs fine-tuned for sentiment analysis can reach over 92% accuracy.

- On GLUE benchmarks, optimized SLMs achieve scores above 80.

- Distilled SLMs retain 95% of the original model performance while reducing size.

- Lightweight SLMs deliver sub-second response times, improving real-time NLP applications.

- SLMs show higher consistency in deterministic tasks, reducing hallucination rates.

Performance Benchmarks on Reasoning and Coding Tasks

- SLMs like Phi-3 Mini achieve ~48%–55% accuracy on coding benchmarks.

- Mistral 7B scores ~30%–40% on reasoning benchmarks.

- Fine-tuned SLMs improve reasoning accuracy by 20%–35%.

- Code-specific SLMs reach ~53% pass@1 on coding tasks.

- Smaller models show 10%–20% lower accuracy than LLMs in complex reasoning.

- Chain-of-thought prompting improves performance by up to 40%.

- SLMs optimized for coding reduce latency by 50%–70%.

- SLMs perform best in structured coding tasks.

- Hybrid systems improve reasoning accuracy by 25% or more.

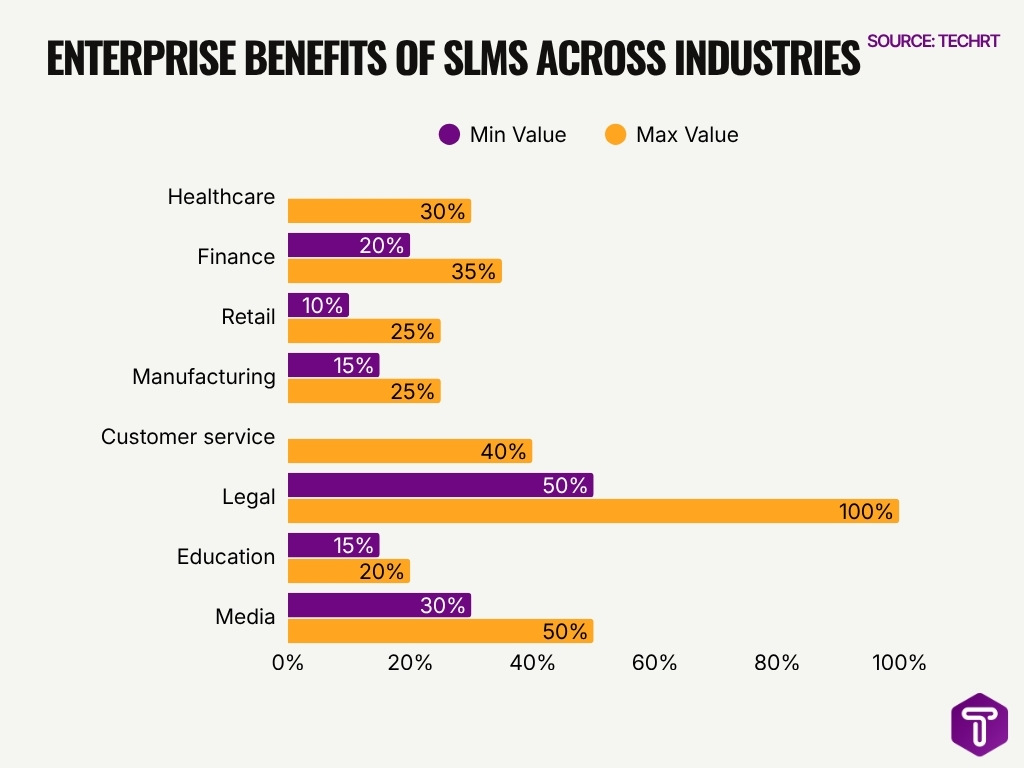

Enterprise Adoption of Small Language Models by Industry

- Around 48% of enterprises are exploring or deploying SLMs.

- Healthcare reduces processing time by up to 30%.

- Finance improves detection rates by 20%–35%.

- Retail increases conversions by 10%–25%.

- Manufacturing cuts downtime by 15%–25%.

- Customer service sees up to 40% cost savings.

- Legal firms reduce workload by 50%+.

- Education improves engagement by 15%–20%.

- Media increases efficiency by 30%–50%.

Model Compression, Distillation, and Quantization Statistics

- Model distillation can reduce model size by 40%–60% while retaining performance.

- Quantization techniques reduce memory usage by 50%–75%.

- Pruning methods remove up to 30% of model parameters.

- Distilled SLMs can achieve 2x faster inference speeds.

- Quantized SLMs run efficiently on CPUs and edge devices.

- LoRA reduces training costs by up to 90%.

- Compression techniques lower storage requirements by 60%–80%.

- Combined optimization methods improve efficiency by up to 10x.

- Sparse models reduce compute requirements by 20%–50%.
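The LoRA point above follows from simple parameter arithmetic: instead of updating a full weight matrix, LoRA trains two low-rank factors. The sketch below counts trainable parameters for a single matrix. The layer shape (4096 × 4096) and rank (8) are hypothetical values chosen for illustration, and the result shows the drop in trainable parameters, not the cost figure quoted above.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds for one weight matrix: two low-rank factors."""
    return rank * (d_in + d_out)


def full_params(d_in: int, d_out: int) -> int:
    """Parameters in the original dense weight matrix."""
    return d_in * d_out


# Hypothetical layer shape and rank, chosen only for illustration.
d_in, d_out, rank = 4096, 4096, 8
lora = lora_trainable_params(d_in, d_out, rank)
full = full_params(d_in, d_out)
print(f"Full matrix: {full:,} parameters")
print(f"LoRA (rank {rank}): {lora:,} trainable parameters ({lora / full:.2%} of the full matrix)")
```

Because the frozen base weights need no gradients or optimizer state, the memory and compute needed for fine-tuning fall sharply, although the exact savings depend on the model, rank, and training setup.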

Inference Latency and Throughput Metrics for SLMs

- SLMs deliver inference latency of <100 milliseconds.

- Throughput can reach 100–500 tokens per second on modern GPUs.

- CPU-based inference achieves 10–50 tokens per second.

- SLMs reduce latency by 5x to 20x compared to LLMs.

- Edge-optimized SLMs maintain real-time performance under 1 second.

- Batch processing increases throughput by 2x–4x.

- Quantized models improve inference speed by 30%–60%.

- SLMs support hundreds of concurrent requests per second.

- Memory-efficient models ensure consistent response times.
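The throughput figures above translate into response times with simple division. The sketch below is an estimate only: it assumes steady-state decoding at a fixed rate, and the 150-token reply length is an arbitrary example value. Under that reading, a sub-100 ms figure is best understood as time to first token or the latency of a very short output, not the time for a full reply.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float,
                       first_token_seconds: float = 0.0) -> float:
    """Estimated wall-clock time: time to first token plus steady-state decoding time."""
    return first_token_seconds + output_tokens / tokens_per_second


# Throughput ranges quoted in the list above; the 150-token reply length is an assumption.
scenarios = {"GPU (low)": 100, "GPU (high)": 500, "CPU (low)": 10, "CPU (high)": 50}
for name, tps in scenarios.items():
    print(f"{name}: {generation_seconds(150, tps):.1f} s for a 150-token reply")
```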

Hardware and Resource Requirements to Run SLMs

- Most SLMs run efficiently on a single GPU with 16GB–24GB VRAM, eliminating multi-GPU dependency.

- Smaller SLMs can operate on standard CPUs with 8GB–16GB RAM, avoiding dedicated accelerators.

- Edge devices support SLMs with a memory footprint under 6GB, enabling on-device processing.

- Training SLMs requires up to 10× less compute power compared to large language models.

- Quantized SLMs reduce GPU memory usage by up to 75%, improving deployment efficiency.

- SLM deployment removes the need for distributed computing infrastructure in 100% of small-scale use cases.

- Inference servers for SLMs cost 70%–90% less than those required for LLMs.

- Embedded systems can run ultra-small SLMs on chips consuming under 10W of power.

- Resource-efficient SLMs enable 100% offline AI functionality in constrained environments.

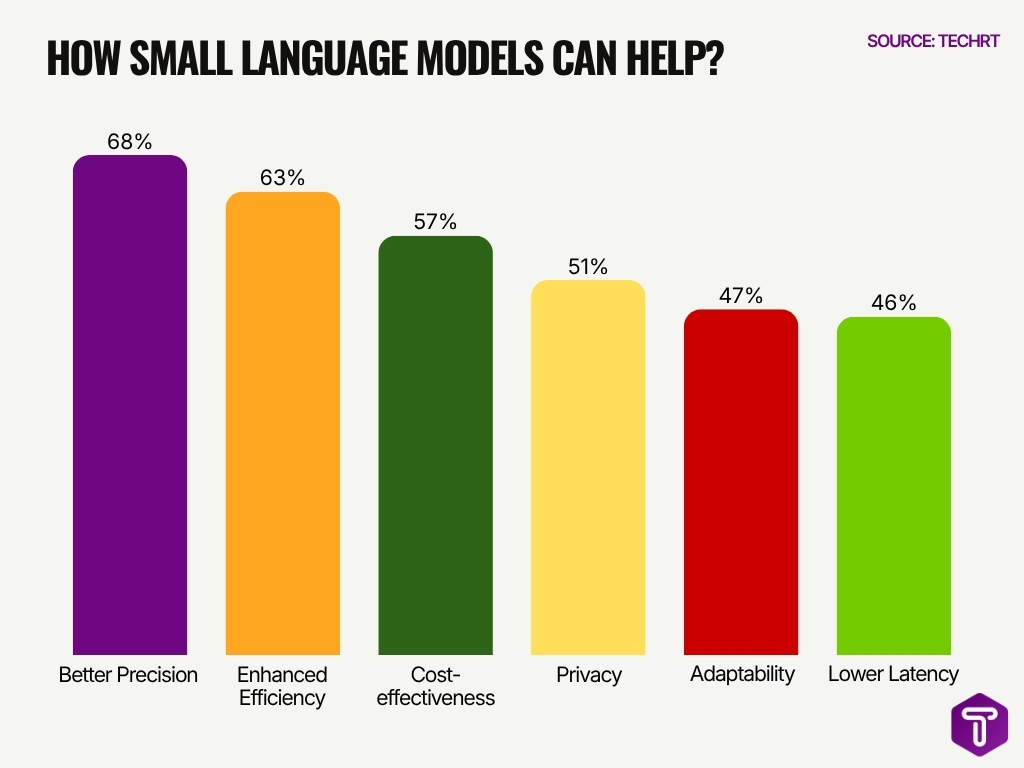

Key Advantages of Small Language Models (SLMs)

- Better Precision is the most-cited advantage of Small Language Models, at 68%, showing that SLMs are valued for delivering more targeted and accurate outputs in specific use cases.

- Enhanced Efficiency ranks second at 63%, highlighting that SLMs can process tasks faster and with fewer computational resources than larger models.

- Cost-effectiveness accounts for 57%, making it one of the strongest adoption drivers for businesses looking to reduce AI deployment and inference costs.

- Privacy stands at 51%, indicating that SLMs are increasingly preferred for on-device, private, or enterprise-controlled AI applications.

- Adaptability is cited by 47%, suggesting that SLMs are useful for domain-specific customization and fine-tuning.

- Lower Latency is reported by 46%, showing that SLMs are well-suited for real-time applications where fast response times are important.

- Overall, the data shows that SLMs are mainly valued for precision, efficiency, affordability, and privacy, making them practical for enterprise, edge, and specialized AI deployments.

Energy Efficiency and Power Consumption of SLMs

- SLMs consume 50%–90% less energy than large models.

- Training emissions are reduced by over 80%.

- Edge SLMs operate within 5W–20W power envelopes.

- Data centers report 30%–60% energy savings.

- Efficient architectures reduce FLOPs by up to 70%.

- Quantization lowers energy consumption by 40%–60%.

- SLMs support sustainable AI deployments.

- Low-power chips extend battery life by 20%–40%.

- Enterprises reduce AI energy costs by over 50% annually.

Cost Savings of SLMs vs LLMs in Deployment

- SLM deployment reduces costs by 5x to 20x compared to LLMs.

- Inference costs range between $0.10 and $0.50 per 1M tokens.

- Enterprises report up to 80% reduction in cloud spending with SLMs.

- On-premise deployment saves thousands of dollars per month in infrastructure costs.

- Training costs for SLMs are often under $1M, significantly lower than LLMs.

- Maintenance costs drop by 60%–90% due to simpler model architectures.

- SLMs enable more predictable cost structures, reducing budget volatility by 40%+.

- Edge deployment reduces bandwidth costs by 30%–70% compared to cloud reliance.

- Hybrid SLM-LLM systems cut overall operational costs by 40%–60%.

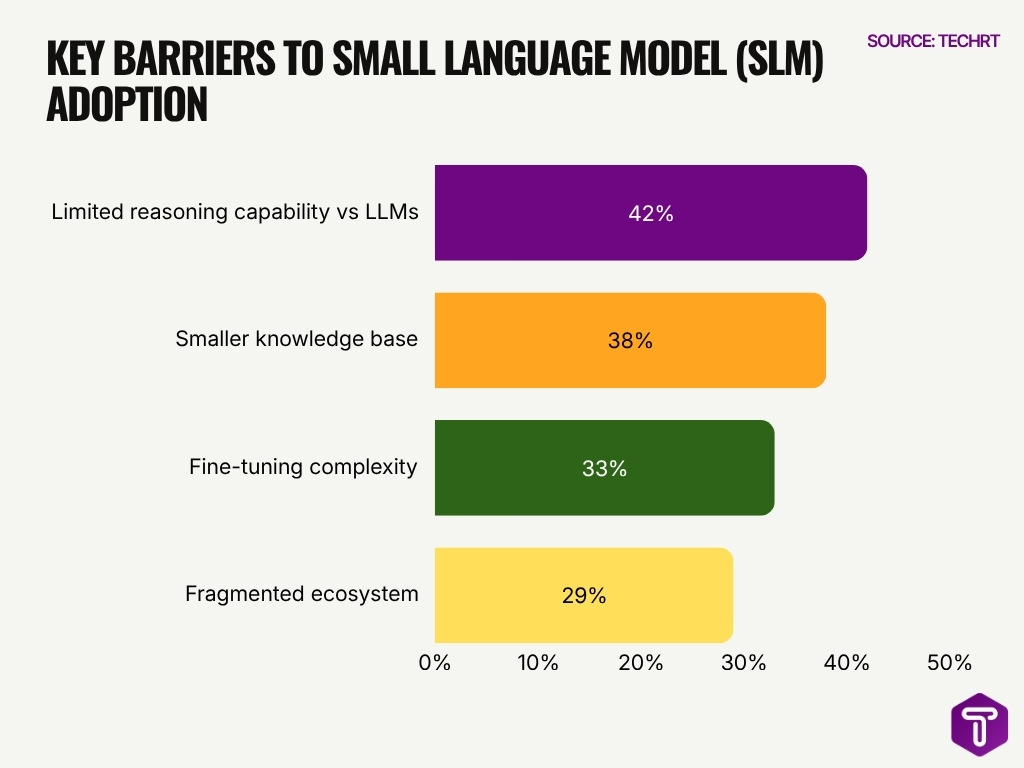

Key Challenges in Small Language Model (SLM) Adoption

- Limited reasoning capability vs LLMs is the biggest challenge, reported by 42% of respondents, showing that SLMs still struggle with complex reasoning tasks compared to larger models.

- Smaller knowledge base ranks second at 38%, indicating that SLMs may provide less comprehensive answers due to fewer parameters and a narrower training scope.

- Fine-tuning complexity affects 33% of users, suggesting that adapting SLMs for specific business or industry use cases can still require technical expertise.

- Fragmented ecosystem is cited by 29%, highlighting concerns around too many competing tools, frameworks, and model options in the SLM market.

- The data shows that while SLMs are attractive for cost efficiency, speed, and on-device deployment, adoption is limited by performance, customization, and ecosystem maturity challenges.

- Overall, the biggest barrier is not deployment cost but capability confidence, as 42% point to reasoning limitations as the top adoption concern.

On-Device and Edge Deployment Statistics for SLMs

- Over 65% of new AI applications in 2026 include edge capabilities.

- SLMs run on devices with <6GB RAM requirements.

- Edge AI adoption is growing at 20%+ CAGR.

- On-device AI reduces latency by up to 90%.

- SLM-powered assistants operate offline.

- Over 40% of new vehicles will include embedded AI by 2027.

- IoT devices reduce cloud dependency by 50% or more.

- Edge deployment lowers data transfer by up to 80%.

- SLMs power real-time applications across industries.

Open-Source vs Proprietary Small Language Model Adoption

- Open-source SLMs represent 62.8% of available models versus 37.2% proprietary.

- 66% of developers trust open-source AI models for learning, and 61% trust them for development work.

- Open-source SLMs cost 86% less, at $0.83 per million tokens versus $6.03 for proprietary models.

- Proprietary SLMs power 87% of enterprise workloads in regulated sectors like finance.

- Hybrid AI stacks combining open-source and proprietary SLMs are adopted by 37% of enterprises.

- Open-source communities release model updates weekly, versus quarterly cycles for proprietary vendors.

- Over 50% of enterprises use open-source AI tools, driving SLM customization in data science.

- Hugging Face hosts thousands of open-source SLM variants like Gemma 3 and Phi-4.

- Proprietary SLMs lead top-tier benchmarks, with quality scores of up to 70 versus 61 for open-source models.

- The SLM market is forecast to grow at a 23.6%–28.7% CAGR to $58B by 2034, a trend that favors open-source models at the edge.

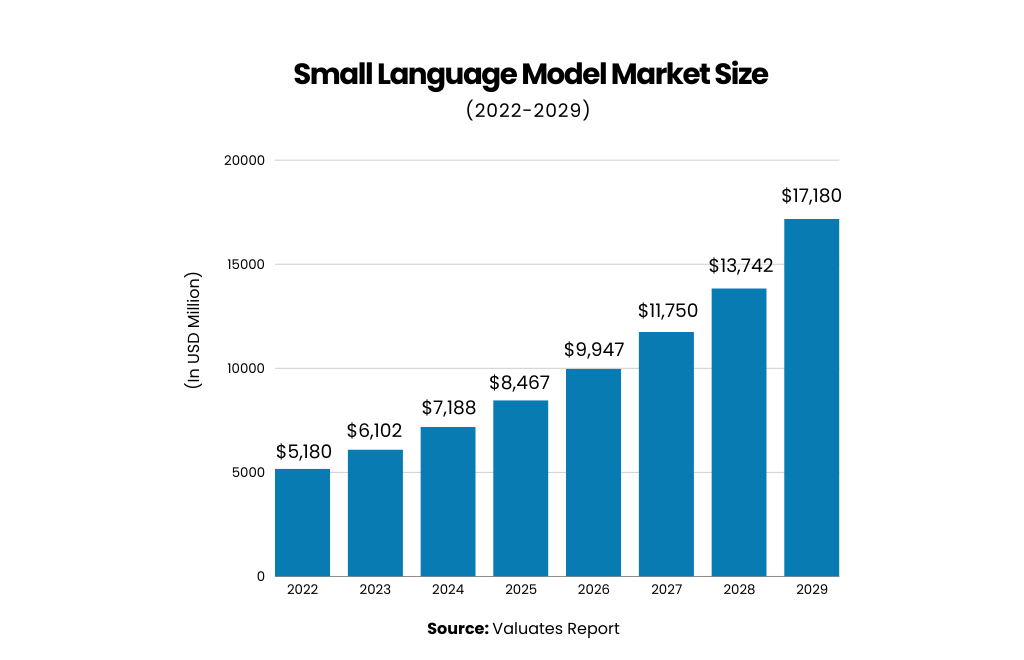

Small Language Model Market Size Growth

- The Small Language Model market is projected to grow from USD 5,180 million in 2022 to USD 17,180 million by 2029.

- The market is expected to add USD 12,000 million in value between 2022 and 2029.

- In 2026, the Small Language Model market size is estimated to reach USD 9,947 million, moving close to the USD 10 billion mark.

- The market is forecast to cross USD 11,750 million in 2027, showing strong adoption of compact and cost-efficient AI models.

- By 2028, the market is expected to reach USD 13,742 million, indicating continued demand for lightweight AI deployment.

- The highest projected value appears in 2029, when the market is expected to reach USD 17,180 million.

- Overall, the data shows a consistent upward trend, highlighting growing interest in Small Language Models for enterprise AI, edge devices, and efficient AI applications.
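As a quick check on these figures, the growth rate implied by the 2022 and 2029 endpoints can be computed with the standard compound annual growth rate formula. This is a sketch based only on the two endpoint values quoted above; it does not reproduce any forecaster's own methodology.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values separated by `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures from the list above, in USD millions.
start, end = 5_180, 17_180
rate = cagr(start, end, 2029 - 2022)
print(f"Absolute growth: USD {end - start:,} million")
print(f"Implied CAGR 2022-2029: {rate:.1%}")
```

The same function can be applied to the other forecasts in this article to compare them on a like-for-like basis.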

Privacy, Security, and On-Device Data Protection Benefits

- 65%–80% of SLM workloads are processed on-device, minimizing external data exposure.

- Enterprises report up to 70% improvement in privacy compliance after adopting SLM-based architectures.

- On-device AI reduces data breach risks by 30%–50% compared to cloud-dependent models.

- Around 60% of enterprises implement zero-data retention policies using SLM deployments.

- Edge AI enables real-time processing in <50 ms, improving secure decision-making speed by 40%.

- Reducing third-party APIs cuts vendor-related security risks by 35%–55%.

- Devices with secure enclaves improve end-to-end data protection by 45% in sensitive applications.

- Automated SLM audits shorten compliance audit cycles by 25%–30%.

- Over 75% of SLM implementations align with GDPR, HIPAA, and global data regulations.

Future Trends and Growth Projections for Small Language Models

- The SLM market is projected to grow at 28.7% CAGR from 2025 to 2032, reaching $5.45 billion.

- Another forecast puts the global SLM market at $17.18 billion by 2030, with 17.8% CAGR.

- A separate estimate values the SLM market at $6.5 billion in 2024 and $64 billion by 2034.

- Gartner expects small, task-specific AI models to be used 3x more than general-purpose LLMs by 2027.

- Edge AI spending is expected to reach $61.63 billion by 2028 at a 26% CAGR.

- Global edge computing services spending is projected to hit $380 billion by 2028, up from about $261 billion in 2025.

- Model compression can reduce AI model sizes by 80%–95%, with some deployments exceeding 90%.

- Compressed models can deliver around 70% lower inference costs and up to 10x faster deployment.

- Regulatory pressure is rising in 2025–2026, with the EU AI Act and the Colorado AI Act tightening AI privacy and governance rules.

- OECD notes a likely hybrid landscape by 2030, where generalist systems are supplemented by domain-specific models.

Frequently Asked Questions (FAQs)

How much cheaper are small language models compared to large language models?

SLMs can be 90% cheaper than LLMs, with costs around $0.01–$0.05 per 1M tokens vs $0.50–$2.00 for larger models.

What is the cost difference per query between SLMs and LLMs?

An SLM query can cost about $0.0004 per request vs $0.09 for LLMs, making it roughly 225x cheaper.

How much can businesses save monthly using SLMs instead of LLMs?

Processing 1 million conversations can cost $150–$800 with SLMs vs $15,000–$75,000 with LLMs.

What are the typical inference costs for SLMs compared to LLMs?

SLM inference costs about $0.10–$0.50 per 1M tokens, while LLMs range from $2 to $30 per 1M tokens.

How much can SLMs reduce large-scale processing costs?

Processing 1 trillion tokens can cost about $750,000 with SLMs vs up to $45 million with LLMs, a reduction of over 98%.
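The ratios in these answers can be reproduced with a few lines of arithmetic. The sketch below uses only the figures quoted above. The per-1M-token prices of $0.75 and $45 are not stated in the article; they are derived here by dividing the 1-trillion-token totals by one million.

```python
def cost_usd(tokens: int, price_per_million_usd: float) -> float:
    """Cost of processing `tokens` tokens at a given price per 1M tokens."""
    return tokens / 1_000_000 * price_per_million_usd


def savings_fraction(slm_cost: float, llm_cost: float) -> float:
    """Fraction of the LLM cost saved by using the SLM instead."""
    return 1 - slm_cost / llm_cost


# Per-query figures from the FAQ above.
per_query_ratio = 0.09 / 0.0004
print(f"Per-query ratio: {per_query_ratio:.0f}x")

# Per-1M-token prices implied by the 1-trillion-token figures ($750,000 and $45 million).
one_trillion = 1_000_000_000_000
slm_total = cost_usd(one_trillion, 0.75)
llm_total = cost_usd(one_trillion, 45.0)
savings = savings_fraction(slm_total, llm_total)
print(f"SLM: ${slm_total:,.0f}, LLM: ${llm_total:,.0f}")
print(f"Savings: {savings:.1%}")
```

Note that the implied rates sit above the $0.10–$0.50 and $2–$30 ranges quoted in the previous answer, which suggests the figures come from different sources and should be compared with care.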

Conclusion

Small Language Models have moved from experimental tools to core components of modern AI infrastructure. Their ability to deliver near-LLM performance at a fraction of the cost makes them ideal for real-time applications, edge deployment, and privacy-sensitive environments. As industries shift toward efficient, scalable AI, SLMs will continue to play a central role in balancing performance, cost, and control.

From on-device assistants to enterprise automation, the data shows a clear trend: smaller, smarter models are driving the next phase of AI adoption.
