Artificial intelligence now powers a wide range of everyday and enterprise applications, from real-time fraud detection in banking systems to personalized recommendations on platforms like google.com. As organizations integrate AI into customer service, logistics, healthcare diagnostics, and software development, the underlying infrastructure required to support these systems continues to expand rapidly. Behind every AI-generated response or automated decision sits a network of high-performance data centers, specialized chips, and continuous computational workloads, all of which demand significant amounts of electricity.

This growing reliance on AI is reshaping global energy consumption patterns. Data centers that once handled routine cloud storage and web services now support energy-intensive tasks such as training large language models and running billions of inference queries daily. As a result, electricity demand from AI is rising faster than many national grids were designed to handle, prompting new investments in infrastructure, renewable energy, and efficiency technologies. This article breaks down the latest AI energy consumption statistics, helping you understand where energy is used, how fast it’s growing, and what it means for businesses, policymakers, and the future of sustainable technology.

Editor’s Choice

- Global data centers consumed ~415 TWh of electricity in 2024, accounting for about 1.5% of total global electricity use.

- Data center electricity demand grew by 17% in 2025, outpacing global electricity demand growth of 3%.

- AI could contribute up to 64% of new data center power demand by 2030.

- AI-optimized servers already account for 21% of data center energy use in 2025.

- Total data center electricity consumption is projected to reach ~980 TWh by 2030, more than doubling from 2025 levels.

- AI-related queries alone are estimated to consume ~15 TWh annually by 2025.

- In the U.S., data centers consumed about 183 TWh in 2024, representing over 4% of national electricity demand.

Recent Developments

- U.S. electricity demand is expected to hit record highs in 2026, driven largely by AI and data center growth.

- Meta has committed to securing 1 GW of solar energy from space-based systems to power AI data centers.

- Entergy plans $57 billion in infrastructure investment, partly to support AI data center expansion.

- The AI data center power consumption market reached $12.5 billion in 2025 and is projected to grow rapidly.

- Ocean-based AI data centers using wave energy are expected to launch by 2026.

- AI may account for up to 49% of total data center electricity consumption by 2025.

- Wholesale electricity prices near data centers have increased by as much as 267% over five years.

- AI infrastructure expansion is expected to require 14 GW of additional power capacity globally by 2030.

Global AI Energy Consumption Overview

- Global data center electricity usage reached ~415 TWh in 2024.

- Data center electricity use has grown about 12% per year since 2017, significantly faster than global electricity demand.

- Data centers are projected to consume between 650 and 1,050 TWh by 2026.

- AI workloads currently account for 5%–15% of total data center electricity use.

- By 2030, AI could represent 35%–50% of data center electricity consumption.

- Global electricity supply for data centers is expected to exceed 1,000 TWh by 2030.

- AI-driven infrastructure expansion could push energy demand beyond 1,300 TWh by 2035.

- The U.S. alone hosts about 45% of global data center capacity, making it a dominant energy consumer.

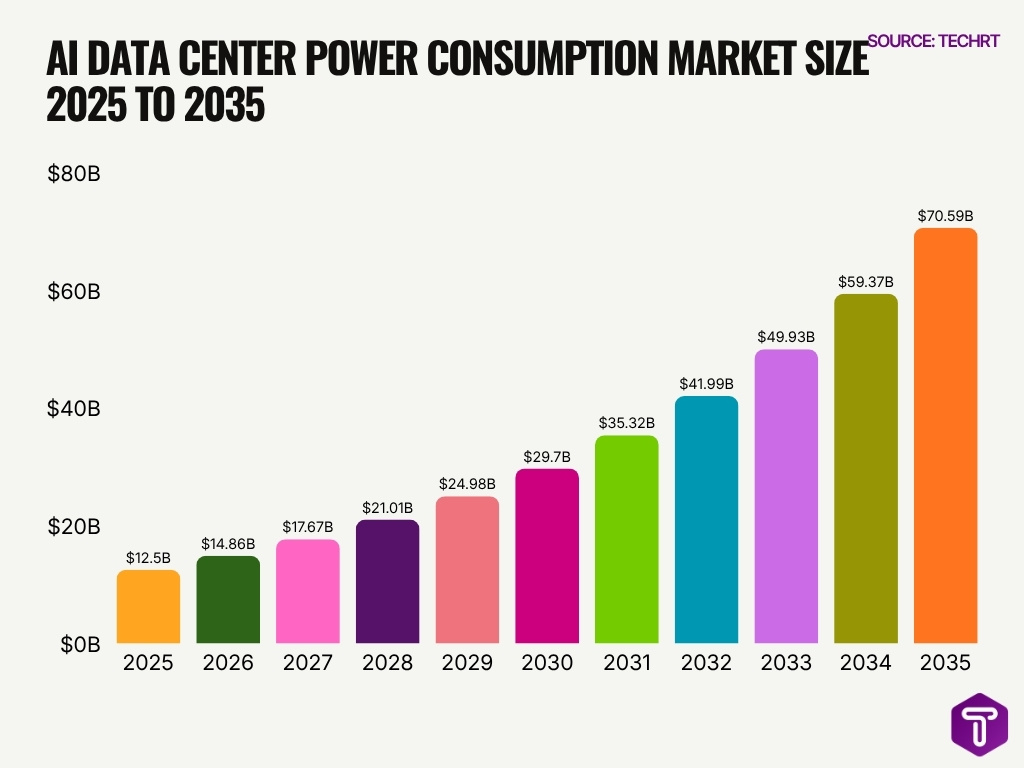

AI Data Center Power Consumption Market Growth

- The AI data center power consumption market is projected to grow from $12.50 billion in 2025 to $70.59 billion by 2035.

- This represents an overall increase of $58.09 billion over the forecast period.

- The market is expected to expand by more than 5.6 times between 2025 and 2035, showing the rising energy demand linked to AI infrastructure.

- By 2030, the market size is forecast to reach $29.70 billion, more than double its 2025 value.

- The market crosses the $40 billion mark in 2032, reaching $41.99 billion.

- Strong growth continues after 2032, with the market increasing to $49.93 billion in 2033 and $59.37 billion in 2034.

- The highest projected value appears in 2035, when the market is expected to reach $70.59 billion.

- The year-over-year increase becomes larger over time, rising from $2.36 billion between 2025 and 2026 to $11.22 billion between 2034 and 2035.

- The data highlights how expanding AI workloads, GPU-intensive computing, and large-scale data center deployment are likely to drive higher power consumption spending.

- Overall, the chart indicates a sustained upward trend, reflecting the growing role of AI data centers in global electricity and infrastructure demand.
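
The growth figures in this list follow from compound annual growth arithmetic. Below is a minimal Python sketch that assumes only the 2025 and 2035 values quoted above; the helper names `cagr` and `project` are illustrative and do not come from the underlying market report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10% per year)."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` for `years` years."""
    return start_value * (1 + rate) ** years


# Figures quoted in the list above, in billions of US dollars.
market_2025 = 12.50
market_2035 = 70.59

rate = cagr(market_2025, market_2035, years=10)
print(f"Implied CAGR 2025-2035: {rate:.1%}")
print(f"Growth multiple: {market_2035 / market_2025:.2f}x")

# Recompute selected years from the implied rate to compare with the list.
for year in (2030, 2032, 2035):
    value = project(market_2025, rate, year - 2025)
    print(f"{year}: ${value:.2f}B")
```

The implied rate comes out near 18.9% per year, and compounding it from the 2025 base lands close to the $29.70 billion (2030) and $41.99 billion (2032) values in the list.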

AI Share of Global Electricity Use

- Data centers accounted for ~1.5% of global electricity consumption in 2024.

- AI-specific workloads may already contribute ~20% of total data center energy usage.

- AI could represent nearly half of data center power demand by late 2025.

- In the U.S., data centers account for 3%–4% of electricity demand today, driven by AI workloads.

- This share could rise to 8%–12% of U.S. electricity demand by 2030.

- Ireland’s data centers consume about 21% of national electricity, highlighting regional concentration.

- In Virginia (U.S.), data centers account for ~26% of electricity consumption.

- Compute growth at leading AI firms alone could add about 1% to global electricity demand by 2030.

AI vs Traditional Data Center Energy Demand

- AI servers are expected to drive 64% of total data center energy growth by 2030.

- Traditional servers contribute only ~9% of future energy demand growth.

- Supporting infrastructure (cooling, networking) accounts for ~21% of energy growth.

- AI-optimized servers made up 21% of power usage in 2025, surpassing many traditional workloads.

- By 2030, AI servers could represent 44% of total data center power usage.

- AI workloads require significantly higher compute density, increasing energy use per rack by up to 10x compared to traditional servers.

- Generative AI training clusters consume far more electricity than standard enterprise applications due to GPU-heavy workloads.

- AI inference demand is scaling faster than traditional cloud workloads, further shifting energy consumption patterns.

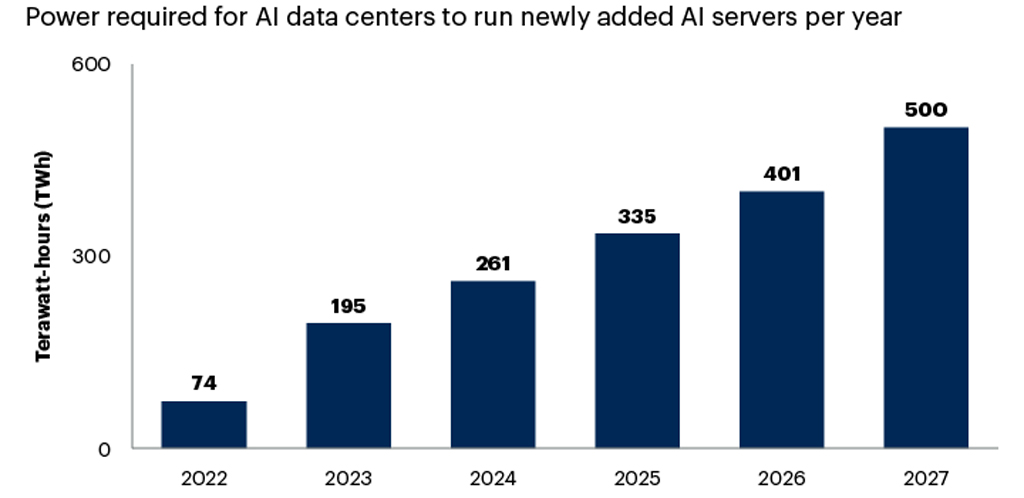

AI Data Center Power Demand Is Rising Rapidly

- The power required to run newly added AI servers is projected to increase from 74 TWh in 2022 to 500 TWh in 2027.

- This represents an increase of 426 TWh over five years.

- In percentage terms, AI server power demand is expected to grow by around 576% between 2022 and 2027.

- The sharpest year-over-year increase occurred between 2022 and 2023, when demand rose from 74 TWh to 195 TWh.

- By 2024, newly added AI servers required an estimated 261 TWh, showing continued growth in AI infrastructure energy needs.

- Power demand is projected to reach 335 TWh in 2025, crossing the 300 TWh mark for the first time in the dataset.

- By 2026, AI server power demand is expected to climb further to 401 TWh.

- The highest projected value is 500 TWh in 2027, highlighting the growing pressure AI workloads may place on global data center electricity consumption.

- From 2023 to 2027, annual power demand more than doubles, rising from 195 TWh to 500 TWh.

- The data suggests that rapid AI server deployment could make energy efficiency, renewable power sourcing, and data center optimization increasingly important priorities.
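
The totals and year-over-year steps in this list can be checked with a few lines of Python. The sketch below uses only the TWh values quoted above; the variable names are illustrative.

```python
# TWh required by newly added AI servers, as quoted in the list above.
demand_twh = {2022: 74, 2023: 195, 2024: 261, 2025: 335, 2026: 401, 2027: 500}

years = sorted(demand_twh)
first, last = years[0], years[-1]

total_increase = demand_twh[last] - demand_twh[first]
pct_growth = total_increase / demand_twh[first] * 100

# Absolute increase between each pair of consecutive years.
yoy_increase = {
    f"{a}-{b}": demand_twh[b] - demand_twh[a]
    for a, b in zip(years, years[1:])
}
largest_step = max(yoy_increase, key=yoy_increase.get)

print(f"Increase {first}-{last}: {total_increase} TWh ({pct_growth:.0f}%)")
for span, delta in yoy_increase.items():
    print(f"{span}: +{delta} TWh")
print(f"Largest single-year step: {largest_step}")
```

This reproduces the 426 TWh total increase and roughly 576% growth, and it confirms that the 2022 to 2023 step (121 TWh) is the largest in the series.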

AI Training Energy Consumption Statistics

- Training a large, GPT-scale AI model can consume 1,200–1,500 MWh per training cycle.

- Training GPT-3 is estimated to have consumed ~1,287 MWh of electricity.

- Advanced multimodal AI models in 2025 require 2x–5x more energy than earlier NLP models.

- Training runs for cutting-edge models can emit over 500 metric tons of CO₂ equivalent.

- AI training workloads account for ~20%–30% of total AI energy consumption, with inference dominating overall usage.

- Large-scale training clusters can require tens of megawatts of continuous power during peak operations.

- Training time for modern LLMs has increased to weeks or even months, raising cumulative energy use.

- Energy consumption per training run has grown by ~10x every 1–2 years for frontier models.

- Distributed training across multiple data centers increases energy overhead by 10%–20% due to networking and redundancy.
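
Training energy is essentially average power multiplied by run time, plus any overhead. The following sketch illustrates that relationship. The 20 MW draw and 30-day duration are hypothetical values chosen for illustration, not measurements of any real training run; only the 1,287 MWh estimate and the 10%–20% overhead range come from the list above.

```python
HOURS_PER_DAY = 24


def training_energy_mwh(avg_power_mw: float, days: float, overhead: float = 0.0) -> float:
    """Energy for one run: average power x duration, scaled by fractional overhead."""
    return avg_power_mw * days * HOURS_PER_DAY * (1 + overhead)


def average_power_mw(energy_mwh: float, days: float) -> float:
    """Average power draw implied by a total energy figure and a run length."""
    return energy_mwh / (days * HOURS_PER_DAY)


# Hypothetical cluster: 20 MW average draw for 30 days (both are assumptions).
base = training_energy_mwh(20, 30)
# Same run with 15% distributed-training overhead (midpoint of the 10%-20% range).
with_overhead = training_energy_mwh(20, 30, overhead=0.15)

# The ~1,287 MWh GPT-3 estimate, spread over an assumed 30-day run.
gpt3_avg_mw = average_power_mw(1287, 30)

print(f"Hypothetical run: {base:,.0f} MWh, with overhead: {with_overhead:,.0f} MWh")
print(f"GPT-3 estimate over 30 days implies about {gpt3_avg_mw:.2f} MW average draw")
```

Under these assumptions, a cluster drawing tens of megawatts for a month uses roughly ten times the energy of the GPT-3 estimate, which is in line with the growth trend described in the list.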

AI Inference Energy Consumption Statistics

- AI inference accounts for ~70%–80% of total AI energy consumption, as models run continuously in production.

- A single large language model query can consume up to 10x more energy than a traditional search query.

- ChatGPT-style queries are estimated to use ~2.9 Wh per request, compared to 0.3 Wh for standard search.

- AI inference workloads are growing at over 30% annually, outpacing training workloads.

- Global inference energy demand could exceed 200 TWh annually by 2030.

- Edge AI inference reduces latency, but increases distributed energy usage across devices.

- Real-time AI applications like fraud detection and recommendation engines run millions of inferences per second globally.

- Optimization techniques such as model pruning can reduce inference energy consumption by up to 40%.

- AI inference energy intensity varies widely depending on model size, hardware, and workload type.

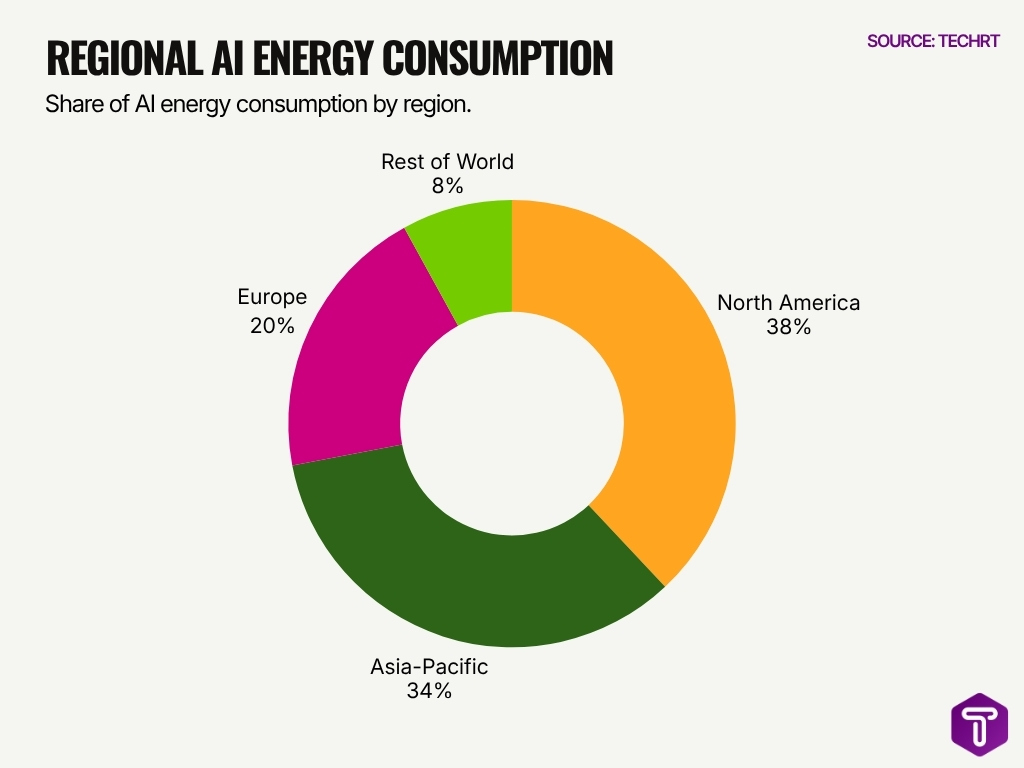

Regional AI Energy Consumption

- North America has the highest share of AI energy consumption at 38%, making it the leading region in AI-related power demand.

- Asia-Pacific follows closely with 34%, showing strong AI infrastructure growth and high data center activity across the region.

- Together, North America and the Asia-Pacific account for 72% of total regional AI energy consumption.

- Europe represents 20% of AI energy consumption, indicating a significant but smaller share compared to North America and Asia-Pacific.

- The Rest of the World contributes only 8%, showing a major gap in AI infrastructure and energy usage compared with leading regions.

- The data suggests that AI energy demand is heavily concentrated in advanced digital economies, especially North America and the Asia-Pacific.

- The difference between North America and Asia-Pacific is just 4 percentage points, showing that the two regions hold nearly equal shares of AI energy consumption.

Energy Use per AI Query or Task

- A single generative AI query consumes approximately 2–5 Wh of electricity, depending on model complexity.

- Traditional Google search queries consume about 0.3 Wh, highlighting the efficiency gap.

- Image generation tasks can use 5–20x more energy than text-based queries.

- Video generation AI models require significantly higher compute and energy per task, often exceeding 100 Wh per output.

- AI-powered recommendation systems process billions of queries daily, contributing to substantial cumulative energy use.

- Autonomous vehicle AI systems can consume 1–2 kWh per hour of operation due to continuous inference.

- Speech recognition tasks typically consume 0.1–1 Wh per request, depending on complexity.

- AI-assisted coding tools generate responses using multiple inference passes, increasing per-task energy consumption.

- Energy per AI task has decreased slightly due to hardware improvements, but overall demand continues to rise.
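
Per-query figures only become meaningful at scale. The sketch below converts watt-hours per query into terawatt-hours per year. The daily query volume is a hypothetical round number used purely for illustration; the per-query values are the ones quoted in the list above.

```python
WH_PER_TWH = 1e12
DAYS_PER_YEAR = 365


def annual_twh(wh_per_query: float, queries_per_day: float) -> float:
    """Annual energy in TWh for a given per-query cost and daily volume."""
    return wh_per_query * queries_per_day * DAYS_PER_YEAR / WH_PER_TWH


# Hypothetical volume: one billion queries per day (an assumption, not a source figure).
ASSUMED_QUERIES_PER_DAY = 1_000_000_000

per_query_wh = {
    "traditional search": 0.3,
    "generative AI (low end)": 2.0,
    "generative AI (high end)": 5.0,
}

for label, wh in per_query_wh.items():
    twh = annual_twh(wh, ASSUMED_QUERIES_PER_DAY)
    print(f"{label}: {twh:.3f} TWh/year")
```

At the assumed volume, the gap between 0.3 Wh and 2–5 Wh per query translates into a difference of roughly 0.6 to 1.7 TWh per year, and the result scales linearly with whatever query volume is substituted.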

Power Requirements of AI Chips and Hardware

- NVIDIA H100 GPUs consume up to 700 watts per chip, significantly higher than previous generations.

- AI server racks can require 30–80 kW per rack, compared to 5–10 kW for traditional servers.

- High-performance AI clusters can exceed 100 MW of total power demand, comparable to small cities.

- Google TPUs are designed for efficiency, reducing energy per operation by up to 80% compared to CPUs.

- AI accelerators now account for over 50% of server power consumption in AI-focused data centers.

- Advanced cooling systems are required for high-density AI hardware, increasing overall power needs by 10%–20%.

- Semiconductor manufacturing for AI chips is also energy-intensive, adding to lifecycle energy costs.

- Custom AI chips improve efficiency but require a high upfront energy investment in fabrication.

- Power density in AI data centers has increased by 3x–5x over the past five years.
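
Rack and cluster power ratings can be turned into annual energy by multiplying by hours of operation. This is a rough sketch that assumes continuous operation at the rated power by default, which overstates real-world usage; the `utilization` parameter is a hypothetical knob for adjusting that.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours


def annual_mwh(power_kw: float, utilization: float = 1.0) -> float:
    """Annual energy in MWh for a load of `power_kw` at the given average utilization."""
    return power_kw * utilization * HOURS_PER_YEAR / 1000


# Rack-level figures quoted in the list above.
traditional_rack = annual_mwh(10)  # upper end of 5-10 kW
ai_rack = annual_mwh(80)           # upper end of 30-80 kW

# A 100 MW cluster expressed in kW, then converted from MWh to TWh.
cluster_twh = annual_mwh(100_000) / 1_000_000

print(f"Traditional rack (10 kW): {traditional_rack:.1f} MWh/year")
print(f"AI rack (80 kW): {ai_rack:.1f} MWh/year")
print(f"100 MW cluster: {cluster_twh:.3f} TWh/year")
```

Running continuously, a single 100 MW cluster would use a little under 0.9 TWh per year, which helps put the global TWh figures elsewhere in this article into perspective.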

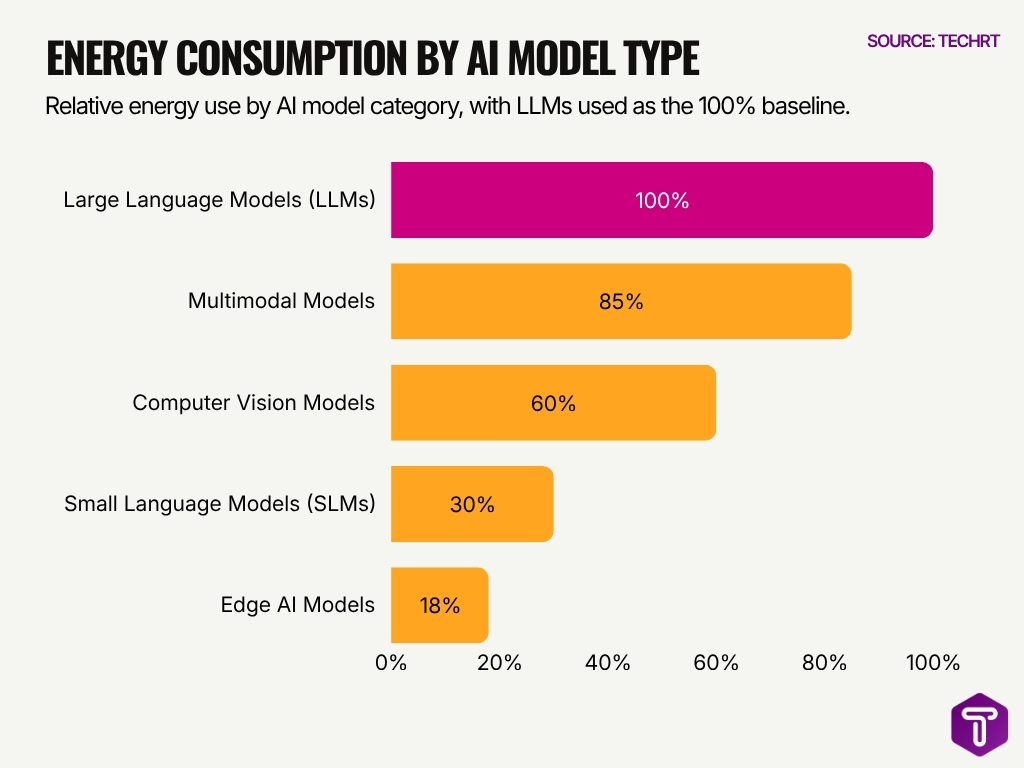

Energy Consumption by AI Model Type

- Large Language Models (LLMs) have the highest relative energy use at 100%, making them the baseline for comparison.

- Multimodal Models consume 85% relative energy, showing they are nearly as energy-intensive as LLMs due to processing multiple data types such as text, images, audio, and video.

- Computer Vision Models use 60% relative energy, which is 40 percentage points lower than LLMs, but still significant for image and video analysis workloads.

- Small Language Models (SLMs) consume only 30% relative energy, making them around 70% less energy-intensive than LLMs.

- Edge AI Models have the lowest relative energy use at 18%, highlighting their efficiency for on-device AI tasks.

- The data shows a clear energy gap between large-scale AI systems and optimized models, with LLMs using over 5.5 times more energy than Edge AI Models.

- Model size, task complexity, and deployment environment strongly influence AI energy consumption.

- The chart suggests that shifting suitable workloads from LLMs to SLMs or Edge AI Models can help reduce overall AI energy demand.

- Energy-efficient AI development is becoming increasingly important as the adoption of high-compute models continues to grow.

- For businesses, using the right model type for the task can reduce infrastructure costs, electricity demand, and carbon emissions.
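
To illustrate how shifting workloads between model types changes overall energy use, the sketch below computes a weighted average of the relative energy values quoted above. The 60/40 routing split is a hypothetical example, and the calculation assumes each task costs the model type's relative energy regardless of the task itself.

```python
# Relative energy use by model type, as quoted in the list above (LLM = 100).
RELATIVE_ENERGY = {
    "LLM": 100,
    "Multimodal": 85,
    "Computer Vision": 60,
    "SLM": 30,
    "Edge AI": 18,
}


def blended_energy(mix: dict[str, float]) -> float:
    """Weighted relative energy for a workload mix whose shares sum to 1."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("workload shares must sum to 1")
    return sum(RELATIVE_ENERGY[model] * share for model, share in mix.items())


all_llm = blended_energy({"LLM": 1.0})
# Hypothetical routing: 40% of tasks moved from an LLM to an SLM.
routed = blended_energy({"LLM": 0.6, "SLM": 0.4})
saving = 1 - routed / all_llm

print(f"LLM vs Edge AI ratio: {RELATIVE_ENERGY['LLM'] / RELATIVE_ENERGY['Edge AI']:.2f}x")
print(f"Relative energy after routing: {routed:.0f} (saving {saving:.0%})")
```

With this hypothetical split, relative energy falls from 100 to 72, a 28% reduction, which shows why matching model size to the task matters.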

Data Center Cooling and Infrastructure Energy Use

- Cooling systems account for 30%–40% of total data center energy consumption.

- Liquid cooling technologies can reduce cooling energy use by up to 50% compared to air cooling.

- AI data centers require higher cooling capacity due to dense GPU clusters generating 2–3× more heat.

- Power usage effectiveness (PUE) for modern data centers averages 1.5, with best-in-class facilities reaching 1.1.

- Cooling-related water usage is rising, with large hyperscale facilities consuming up to 1.8 billion gallons annually.

- Immersion cooling can cut cooling energy use by 26.6%–80%.

- Backup power systems add 10%–15% to total energy infrastructure costs.

- AI data centers are increasingly co-located with renewable energy sources to cut cooling and transmission costs.

- Infrastructure energy use can equal or exceed compute energy in some AI workloads.
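
PUE is the ratio of total facility energy to the energy used by IT equipment, so it directly determines how much of a facility's electricity goes to cooling and other overhead. The sketch below applies that definition to the PUE values quoted above; the 100 MWh IT load is an arbitrary illustrative figure.

```python
def facility_energy(it_energy_mwh: float, pue: float) -> float:
    """Total facility energy implied by an IT load and a PUE value."""
    return it_energy_mwh * pue


def overhead_share(pue: float) -> float:
    """Fraction of total facility energy that is not consumed by IT equipment."""
    return 1 - 1 / pue


IT_LOAD_MWH = 100  # arbitrary illustrative IT load

for label, pue in (("average", 1.5), ("best-in-class", 1.1)):
    total = facility_energy(IT_LOAD_MWH, pue)
    share = overhead_share(pue)
    print(f"{label} (PUE {pue}): {total:.0f} MWh total, {share:.1%} overhead")
```

A PUE of 1.5 means about one-third of total facility energy goes to non-IT overhead, consistent with the 30%–40% cooling range above, while a PUE of 1.1 brings that share down to about 9%.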

Carbon Emissions from AI Energy Consumption

- Global data centers emitted approximately 300 million metric tons of CO₂ in 2024, with AI contributing a growing share.

- Training a single large AI model can emit up to 500 metric tons of CO₂, equivalent to the annual emissions of 100+ passenger cars.

- AI-related emissions could reach 1 billion tons annually by 2030 if current trends continue.

- Data center emissions account for ~0.9% of global energy-related CO₂ emissions.

- Generative AI workloads are estimated to increase emissions by 20%–30% in hyperscale data centers.

- Carbon intensity varies widely, with coal-heavy regions producing 2–3x higher emissions per kWh.

- AI inference at scale contributes significantly due to continuous operation, adding millions of tons of CO₂ annually.

- Tech companies are investing in carbon offsets and clean energy to mitigate AI emissions growth.

- Lifecycle emissions from AI include hardware manufacturing, adding 15%–25% to the total carbon footprint.
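
Emissions estimates like these come from multiplying energy use by the carbon intensity of the electricity supply. The sketch below shows that calculation. The baseline intensity of 0.4 kg CO₂ per kWh is an illustrative assumption, not a figure from this article; the 1,287 MWh training estimate and the 2–3x multiplier for coal-heavy grids are taken from the statistics above.

```python
def emissions_tonnes(energy_mwh: float, kg_co2_per_kwh: float) -> float:
    """Metric tons of CO2 for a given energy use and grid carbon intensity."""
    kwh = energy_mwh * 1000
    kg = kwh * kg_co2_per_kwh
    return kg / 1000


TRAINING_MWH = 1287      # GPT-3 training estimate quoted earlier in this article
BASELINE_INTENSITY = 0.4  # kg CO2 per kWh; an assumed, illustrative grid average

baseline = emissions_tonnes(TRAINING_MWH, BASELINE_INTENSITY)
print(f"Baseline grid: {baseline:.1f} t CO2")

# Coal-heavy regions are described above as 2-3x more carbon-intensive.
for multiplier in (2, 3):
    tonnes = emissions_tonnes(TRAINING_MWH, BASELINE_INTENSITY * multiplier)
    print(f"{multiplier}x intensity: {tonnes:.1f} t CO2")
```

Under the assumed baseline, a 1,287 MWh training run produces a little over 500 metric tons of CO₂, and the same run on a grid two to three times as carbon-intensive would emit proportionally more.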

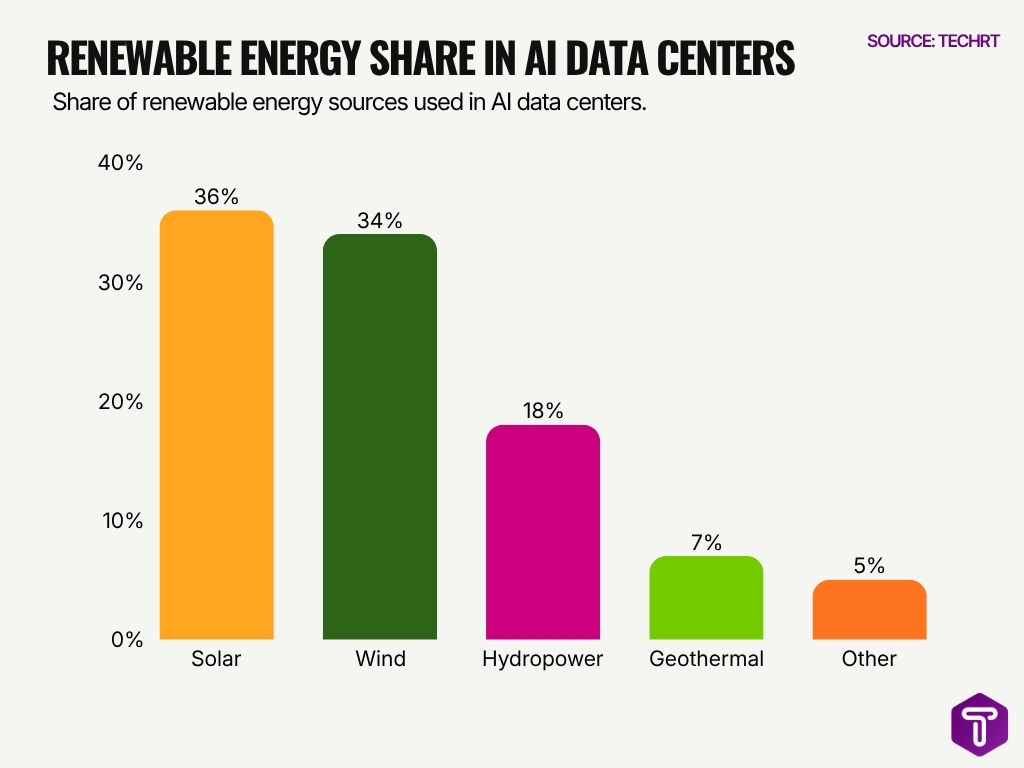

Renewable Energy Share in AI Data Centers

- Solar energy is the largest renewable source used in AI data centers, accounting for 36% of the total renewable energy share.

- Wind power follows closely with 34%, showing that wind and solar together dominate renewable energy use.

- Combined, solar and wind contribute 70% of the renewable energy mix in AI data centers.

- Hydropower represents 18%, making it the third-largest renewable source.

- Geothermal energy has a smaller share at 7%, but it still supports clean energy diversification.

- Other renewable sources make up the remaining 5% of the total share.

- The data shows that AI data centers are projected to rely heavily on solar and wind energy as primary renewable power sources.

- This renewable mix highlights the growing push to reduce the carbon footprint of AI infrastructure through cleaner energy sources.

Energy Efficiency Improvements in AI Hardware

- AI hardware efficiency has improved by ~10x over the past decade, reducing energy per computation.

- Specialized AI chips deliver up to 30x better performance per watt than CPUs.

- Google TPUs reduce energy usage by up to 80% for certain AI workloads.

- Quantization techniques cut energy consumption by 20%–50% in AI models.

- Hardware improvements offset 15%–20% of annual energy demand growth.

- Advanced semiconductors boost efficiency by ~25% vs older chips.

- NVIDIA A100 GPUs improve energy efficiency 5x on average for AI apps.

- Brain-inspired chips reduce AI energy use by more than 70%.

Impact of AI on National Power Grids

- Global data center electricity demand is projected to more than double to about 945 TWh by 2030, driven heavily by AI workloads.

- Data centers, increasingly driven by AI, already consume roughly 1.5% of global electricity, and IEA projections show this could rise sharply this decade.

- AI‑linked electricity consumption in data centers is expected to triple by 2030, adding hundreds of terawatt‑hours to annual grid demand.

- Analysts estimate global power demand from data centers will grow by up to 165% by 2030, with AI accounting for a rapidly expanding share.

- Some AI‑focused data centers now operate at campus‑level peak loads as high as 1 GW, intensifying pressure on local transmission and distribution grids.

- In the United States, AI data centers may consume 8–12% of total electricity demand by 2030, up from about 3–4% today.

- Northern Virginia’s grid faces acute strain because data centers already draw around 26% of the region’s electricity, with AI further amplifying load.

- Data center electricity demand rose by roughly 17% in 2025, far exceeding the roughly 3% growth in global electricity demand.

- Utilities globally are committing tens of billions of dollars in grid upgrades to handle AI‑driven load growth and peak demand spikes.

- AI‑enabled smart grid and demand‑response tools have already helped reduce transmission losses by around 5–10% in modern power systems.

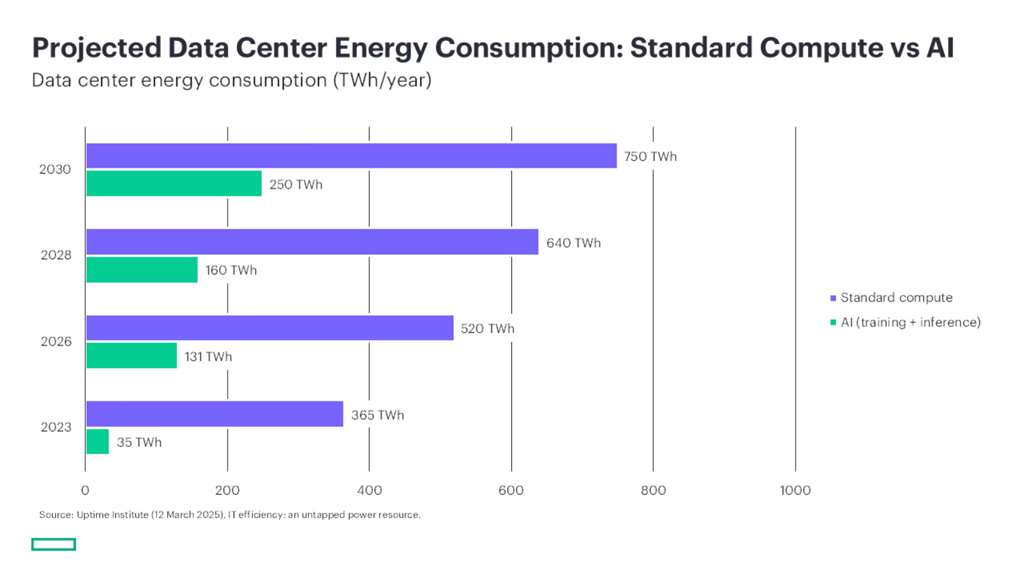

Projected Data Center Energy Consumption Trends

- Standard compute energy consumption is projected to rise from 365 TWh/year in 2023 to 750 TWh/year by 2030.

- AI energy consumption from training and inference is projected to grow sharply from 35 TWh/year in 2023 to 250 TWh/year by 2030.

- Between 2023 and 2030, standard compute energy use is projected to increase by about 105%.

- AI-related data center energy use is projected to increase by more than 7 times between 2023 and 2030.

- By 2030, AI workloads are projected to consume around one-third as much energy as standard compute workloads.

- The data suggests that AI will become a major driver of future data center electricity demand.

- While standard computing remains the larger energy consumer, AI shows a much faster projected growth rate.

- The rising figures highlight the need for energy-efficient AI models, optimized data center infrastructure, and cleaner power sources.
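
The growth rates and ratios in this list can be reproduced from the four TWh values quoted above. The sketch below also derives AI's share of the total, under the assumption that standard compute and AI together make up all data center consumption in this dataset.

```python
# TWh per year, as quoted in the list above.
projection_twh = {
    2023: {"standard": 365, "ai": 35},
    2030: {"standard": 750, "ai": 250},
}


def pct_increase(old: float, new: float) -> float:
    """Percentage increase from `old` to `new`."""
    return (new - old) / old * 100


def ai_share(year: int) -> float:
    """AI's fraction of the total, assuming standard + AI covers all consumption."""
    data = projection_twh[year]
    return data["ai"] / (data["standard"] + data["ai"])


standard_growth = pct_increase(projection_twh[2023]["standard"], projection_twh[2030]["standard"])
ai_multiple = projection_twh[2030]["ai"] / projection_twh[2023]["ai"]
ai_vs_standard_2030 = projection_twh[2030]["ai"] / projection_twh[2030]["standard"]

print(f"Standard compute growth 2023-2030: {standard_growth:.1f}%")
print(f"AI growth multiple 2023-2030: {ai_multiple:.2f}x")
print(f"AI relative to standard compute in 2030: {ai_vs_standard_2030:.2f}")
print(f"AI share of total: {ai_share(2023):.1%} in 2023, {ai_share(2030):.1%} in 2030")
```

Under that assumption, AI's share of data center electricity rises from about 9% in 2023 to 25% in 2030.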

Frequently Asked Questions (FAQs)

How much electricity did global data centers consume in 2024?

Global data centers consumed about 415 TWh in 2024, representing ~1.5% of total global electricity use.

How fast did data center electricity demand grow in 2025?

Data center electricity demand increased by 17% in 2025, with AI-focused centers growing even faster at around 50%.

What is the projected global data center energy consumption by 2026?

Data center electricity use is projected to reach between 650 and 1,050 TWh by 2026; the upper end of that range would be more than double the ~415 TWh consumed in 2024.

What share of data center power do AI-optimized servers use?

AI-optimized servers account for about 21% of total data center power in 2025, expected to rise to 44% by 2030.

What is the expected CAGR of the AI data center power market?

The AI data center power consumption market is projected to grow at a CAGR of ~18.9% from 2026 to 2035.

Conclusion

AI energy consumption is no longer a niche concern; it now sits at the center of global energy strategy. From training massive models to running billions of daily inferences, AI systems are reshaping how electricity is produced, distributed, and consumed. While efficiency gains in hardware and the shift toward renewable energy offer some relief, the pace of AI adoption continues to outstrip these improvements.

Looking ahead, businesses, policymakers, and energy providers must align strategies to balance innovation with sustainability. Understanding these statistics helps decision-makers plan infrastructure, manage costs, and reduce environmental impact. As AI continues to scale, its energy footprint will remain a defining factor in how the technology evolves.
